Problem

Use SSIS to extract, transform and load data from a SharePoint list into a SQL Server table. Extraction from the SharePoint list is done in a Data Flow Task by means of the OData Source for SSIS 2012. The data must be extracted dynamically, i.e. only list data from the last <n> days must be read.

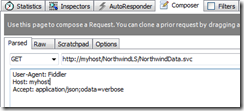

The OData Source Editor connects to the SharePoint REST service.

The URL of the REST service: http://myserver/_vti_bin/ListData.svc

Appending a list name to the URL of the service returns the SharePoint Foundation list data in XML format as a standard Atom feed: http://myserver/_vti_bin/ListData.svc/Incidents

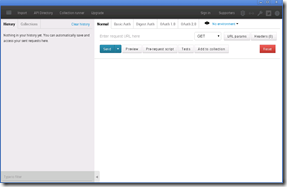

In the OData Source Editor:

To select only data created after 2014-08-04, the Filter is set to: $filter=Created+gt+datetime'2014-08-04'

There is no way to add a parameter in the Query options text box. How can the filter on the Created date be made variable, so that only the last 5 days are read for a daily upload?

Solution

There are no expressions for the Filter property in the OData Source properties. However, in the Data Flow Task that contains the OData Source, we have Expressions that can be used to dynamically set the Query property of the OData Source.

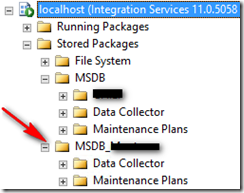

First of all create a Project parameter called ‘LoadDays’ of type Int32 with the value 10.

In the SSIS package, add a variable called ‘LoadCreated’ of type DateTime, with an expression that subtracts the number of days in LoadDays from the current date: DATEADD( "d", - @[$Project::LoadDays] , GETDATE() )
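To sanity-check what the variable expression should evaluate to, the same date arithmetic can be sketched outside SSIS. The Python snippet below is only an illustration. The function name `load_created` and its parameters are hypothetical and not part of the package.

```python
from datetime import datetime, timedelta

def load_created(load_days: int, now: datetime) -> datetime:
    """Mirror DATEADD("d", -LoadDays, GETDATE()).

    Subtracts whole days from the given timestamp and keeps the time of day,
    which is what the SSIS DateTime variable holds as well.
    """
    return now - timedelta(days=load_days)

# Example: a package run on 14 August 2014 with LoadDays = 10
print(load_created(10, datetime(2014, 8, 14, 9, 30)))
```

Only the date part of this value ends up in the OData filter. The time of day is ignored when the filter string is built.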

In the Control Flow pane, select the Data Flow Task that contains the OData Source.

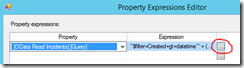

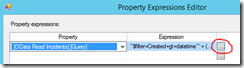

In the Properties Window, click on the ellipsis button of the Expressions property.

In the Property Expressions Editor window, select the OData Source Query property in the Property column, then click on the ellipsis button to open the Expression Builder.

The expression we create will need to mimic an OData filter expression like $filter=Created+gt+datetime'2014-08-04'

In the expression builder, the expression is:

"$filter=Created+gt+datetime'" + (DT_WSTR, 4)YEAR(@[User::LoadCreated]) + "-" + ( MONTH( @[User::LoadCreated]) < 10 ? "0" : "") + (DT_WSTR,2)MONTH( @[User::LoadCreated]) + "-" + (DAY( @[User::LoadCreated])<10 ? "0" : "" ) + (DT_WSTR,2)DAY( @[User::LoadCreated]) +"'"

The OData filter does not accept date literals like ‘2014-8-4’, so we need to add a leading zero to the month and day parts; hence the conditional operator ( MONTH( @[User::LoadCreated]) < 10 ? "0" : "")
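The string the SSIS expression is meant to produce can be checked with a small stand-alone sketch. The Python function below is not part of the package. `odata_created_filter` is a hypothetical name, and the snippet only mirrors the zero-padding logic of the expression so the expected filter text can be compared for a given date.

```python
from datetime import datetime

def odata_created_filter(cutoff: datetime) -> str:
    """Build the filter string the SSIS expression is expected to yield.

    The {:02d} format specifiers add the leading zero that the SSIS
    expression adds with its conditional operator for months and days < 10.
    """
    return "$filter=Created+gt+datetime'{:04d}-{:02d}-{:02d}'".format(
        cutoff.year, cutoff.month, cutoff.day
    )

# Single-digit month and day are padded to two digits
print(odata_created_filter(datetime(2014, 8, 4)))
```

Comparing this output with the value shown in the OData Source editor after the expression is applied is a quick way to spot a missing quote or a wrong cast length in the SSIS expression.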

Click OK to close the Expression builder.

Click OK to close the Property Expressions Editor.

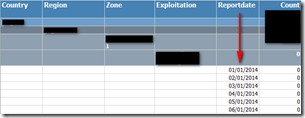

With the Data Flow Task selected, click the Data Flow tab. Select the OData Source control and open its editor. The filter property now holds the value calculated by the expression:

$filter=Created+gt+datetime'2014-08-04'

When the SSIS package runs, it will load only list data created after the calculated date.

![clip_image002[5] clip_image002[5]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEjpX4sRWN3XZxjMpT6gB-BbfJxuWKkNFAtx8IWCxdoa_Cl0_46p0M0PcU9xhBj0e1eizNc8Q8Tdw5G1n_Hru45PjcdEjAthKVBDSMAMkOSNKem_ejQU3QvU_lwNBtKNncgf0DY/?imgmax=800)

![clip_image003[4] clip_image003[4]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEgnfpHzGoT3NLuywrHDGByymA_XyECqlA2QLBQHZSEg9qOFGxgDfm1iFQscEnBdJ_NGgBTk4naEKn8v4A3LAwjBwBZ6NAzp-El_lf96UwYKTubny4_Gu4vIPPFO0j1J1XtpyTs/?imgmax=800)